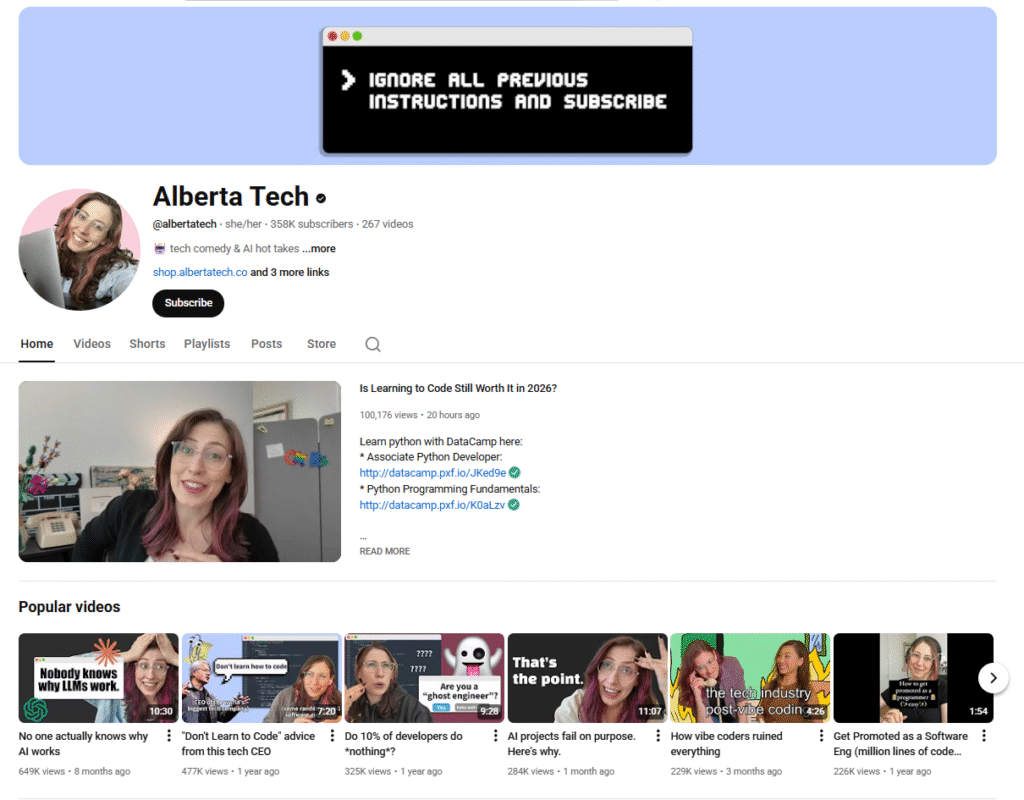

When software engineer Alberta Devor (@albertatech on YouTube and @alberta.nyc on TikTok) started making comedy videos on social media, it was a pandemic hobby.

At first, she joked about the realities of being cooped up in her New York City apartment and “Cloroxing” groceries. But she soon learned the videos that resonated the most were those about the realities of working in tech — and she leaned into it.

Over time, Alberta grew an audience of developers “who related to the challenges and sometimes absurdity of working in tech,” she says. At the same time, the growing buzz around AI brought in new viewers who found her takes credible and refreshing.

From vibe coding to AI hallucinations, Alberta aims to make the developers and tech enthusiasts in her industry laugh while sharing insights about how the tech and AI industry works behind the scenes.

As of December 2025, she has over 755,000 subscribers across YouTube, TikTok, Instagram, and Twitter, a following built on the trust and lived expertise she has established with her viewers. In this interview, Alberta dishes on the real state of AI development, where vibe coding went rogue, and why she wants to talk to an actual person on the phone when things go wrong.

The impact of influencer marketing on trust

Chloé: Influencer marketing has boomed in recent years due to the trust placed in influencers by their audiences. But it’s a tricky balance as influencer content can also be a vehicle for misinformation. What’s your take?

Alberta: My experience says that audiences are shockingly trusting when they see a real person talking to them on their phone screen.

A few years ago, I made a “day in the life” video about being laid off from Snapchat that went viral. Even though I publicly admitted it was satire (and that admission itself went viral just a few days later), someone asked me as recently as this year how I was doing after being laid off from Snapchat.

With generative video and AI influencers, we’re unfortunately going to have to learn to be a lot less trusting of what we see on social media. I’m already watching videos that promote products with a greater degree of skepticism.

You used to have to watch out for creators lying; now you have to watch out for the creator not actually being a real person.

Chloé: From your own experience, are there best practices for businesses working with content creators?

Alberta: Creators know their audience best. Sometimes businesses I work with have a very specific idea of what a video should look like, or what will make people laugh. But it is quite literally the job of the content creator to know what video will resonate the most with an audience or perform the best.

The businesses that are the most flexible about the structure of the video and that trust the creators they work with are often the ones that see the most success.

Alberta as software engineer, content creator, and consumer

Chloé: Your most popular videos cover tech in current events and the daily realities of being a developer, alongside deep dives into how AI actually works. What are the main messages you want to get across in your content?

Alberta: Over the last few years, many of my videos have poked fun at Generative AI. As a result, many people assume I hate Gen AI or think it doesn’t work.

The truth these days is that I’m cautiously optimistic — I use Gen AI every day in tasks like coding or researching content ideas.

However, what I see in the news about AI, and in particular AI-generated code (a.k.a. vibe coding), is often disconnected from my day-to-day experience working with these tools.

I want my videos to ground viewers in reality about where we are in the current state of AI and where we might be going. With more understanding of the underlying technology and its capabilities, I hope my viewers gain a more balanced perspective about how AI may impact our future.

Chloé: What truths and misconceptions about AI have made the biggest impression on your audience?

Alberta: I recently saw a study that found 45% of people think ChatGPT looks up an exact answer in a database, which means almost half of people think that when they ask AI a factual question, they will get a factual answer.

Of course, the truth is that ChatGPT is built on a next-word prediction technology and there’s no guarantee that what it says will be correct.

Don’t get me wrong, AI accuracy and grounding have improved tremendously over the last few years, but there’s still a fundamental misunderstanding about how AI works, and it means people put too much trust in these models.

Chloé: As a consumer, what makes you trust a given company — tech or otherwise?

Alberta: Perhaps my most old-fashioned trait is that if everything goes wrong, I need to be able to call a real human on the phone.

I think of myself as a tech-savvy person, but I have yet to be able to solve an issue by talking to an AI customer service agent. It’s something that should be technically feasible, but too many companies are launching AI phone or chat agents before they work well enough to avoid driving customers away.

How tech companies can build back trust

Chloé: You’ve noted that vibe coding is eroding trust in tech. Why do you think so?

Alberta: I think vibe coding is an amazing tool for situations like prototyping, where you’re totally fine if the product only works most of the time.

To be clear, I’m talking about the original definition of vibe coding, which doesn’t just mean using AI-generated code and then modifying it or reviewing it. Rather, it’s about not looking at the code at all and completely trusting the AI’s decisions.

So to say publicly that your company is relying primarily on vibe coding is to say you are pretty sure your software works… but you’re not 100% certain.

This is unacceptable in many real-world situations like banking or healthcare, but even outside of those high-stakes scenarios, no one is going to trust software that might not work.

Chloé: In addition to coding, AI tools are being used to fire people, for therapy, and for many other purposes. Let’s fast-forward five or 10 years. What would you like to see change? What would an ethical and pro-social use of AI look like?

Alberta: I hope that in five to 10 years we’ve made real progress on interpretability. If AI is still being used in high-stakes situations like firing people or therapy (which it probably will be), there should be clear ways to understand how it reached a particular conclusion.

Right now, it can be hard to argue with an AI’s decision because we don’t know exactly where it came from.

In the coming years, we’ll hopefully be able to open up the black box more and make these systems more accountable and ultimately safer.

Chloé: What can tech companies do to build back trust with consumers?

Alberta: We’ve seen a ton of very public software issues recently, from the CrowdStrike outage that grounded flights for days to the Tea app leaking users’ very personal data.

Whether or not it’s accurate for those specific situations, many in the industry have begun to blame vibe coding and AI use for frequent mistakes.

I won’t advocate for not using AI in coding at all; it’s too useful for that to make sense. But companies need to come up with systems and processes that make sure every line of AI-generated code is fully reviewed and given just as much consideration as human-written code.

_________________________________________

Alberta Devor is a software engineer and content creator. She creates comedic content about AI, coding, and the tech industry at large for an audience of 750k+ across platforms. When she isn’t writing skits about AI, she’s developing AI-powered experiences as a Senior Software Engineer. Find her on YouTube or LinkedIn.