How to build an AI-first organic growth system

In chapter one of this guide, we introduced you to the “visibility up, clicks down” pattern, and how discovery now starts in AI-generated answers.

For a long time, organic growth was just SEO: ranking, earning a click, and converting on your site. That model still matters, but mostly at the bottom of the journey, when buyers are ready to act.

Things have changed. This is why we treat organic growth as one system with two interconnected components:

- Generative Engine Optimization (GEO) earns inclusion in the answers and third-party sources that shape buyer decisions before the click

- Search Engine Optimization (SEO) captures demand when buyers are ready to click and take action

Both are fed by the same fundamentals: clarity, structure, and trust.

But to create an organic growth system that protects traffic, pipeline, and revenue, you need to understand what AI engines actually do when a buyer asks a question.

In this chapter, I’ll break down:

- Why ranking alone is no longer enough to guarantee visibility

- How AI evaluates brands and content

- How discovery and evaluation fan out across multiple channels

- What a modern SEO and GEO system must include to keep your brand visible

- Practical tips for implementing your own organic growth strategy

At a glance

- SEO and GEO now form one AI-first organic growth system. Visibility begins in AI-generated answers and converts through high-intent search; both must work together to protect your pipeline.

- Rankings no longer equal visibility. AI answer engines prioritize completeness, credibility, and cross-source validation over traditional position-one rankings.

- Query fan-out defines AI evaluation. To be cited, brands must cover functional, comparative, contextual, implementation, and economic decision facets.

- Extractable, answer-first content increases AI inclusion. Modular structure, question-led headings, schema, and clear claims improve citation likelihood.

- Entity clarity, technical legibility, and off-site validation drive trust. Consistent branding, crawlability, structured data, reviews, and comparison mentions shape AI recommendations.

- Reverse-engineer organic growth from revenue outcomes. Track decision-stage prompts, map visibility gaps, and align SEO and GEO to measurable pipeline impact.

Why you need to reverse-engineer to your desired organic growth outcomes

You don’t “do SEO” and “do AI search” as separate workstreams. You must design one organic growth system that starts from the outcome you care about and works backward.

Reverse-engineering from business outcomes has always been central to how we work at Skale. We start with the deepest measurable goal available: typically qualified trials, SQLs, or pipeline. Only then do we decide which levers to pull across SEO and GEO, brand, distribution, digital PR, conversion rate optimization (CRO), and AI visibility.

If your goal is to increase enterprise demos, you don’t begin with “What should we publish next?” You begin with revenue conversations: What comparisons come up in sales calls, what objections slow procurement, what buyers ask in ChatGPT, and what narratives dominate review platforms, LinkedIn, YouTube, and online communities.

At a high level, the planning process looks like this:

Define the north-star outcome

Pick the commercial metric you actually want to move, like SQLs, pipeline, or revenue. This gives the organic program a clear job to do.

Map the evaluation journey

What buyers need to understand, compare, and validate before they convert. Fan-out makes this practical. (More on this later.)

Identify where trust must exist

Both on site and across the sources buyers and AI systems rely on during evaluation, like review platforms and comparison articles.

Plan the compounding inputs

Positioning clarity, on-site legibility, evaluation-stage coverage, extractable structure, and off-site reinforcement.

In this model, SEO and GEO are fed by the same system. GEO shapes inclusion and preference earlier, when buyers are forming a shortlist. Then SEO captures demand when buyers are ready to click.

Why rankings alone no longer equal visibility

When someone asks a question in ChatGPT, Gemini, or Perplexity, or triggers an AI Overview, the system seeks to assemble a credible answer instead of searching for the top ranking page.

It breaks the prompt into related sub-questions, pulls from multiple sources, synthesizes what it considers to be a complete and credible response, then decides what sources are safe and useful enough to cite.

That’s why you can rank well and still be absent from AI answers.

In practice, when I see ranked pages being ignored, it’s likely a result of these three structural gaps:

Thin topical coverage

You might have one good page, but the surrounding content cluster doesn’t exist or is poorly supported. From the model’s perspective, your brand doesn’t “own” the topic. So you might appear once, while competitors with broader coverage appear across multiple sub-queries, including functional, comparative, and implementation-based prompts. (I’ll explain this below.)

Missing comparative or economic context

Your content explains what something is, but not how it compares, what trade-offs exist, or how cost and risk should be evaluated. AI systems need all those layers to construct credible answers. If you don’t provide them, the model fills the gap with someone who does.

Poor extractability

Your answer may be buried, implied, or wrapped in marketing language. AI systems prefer clear, direct explanations they can reuse cleanly. If your key points take five paragraphs to uncover, it’s hard to reuse and less likely to be cited.

Rankings still matter, because Google is still where a lot of high-intent demand gets captured.

We saw this firsthand with Usercentrics. Amid the shift to a zero-click search engine results page (SERP), clicks clearly moved to bottom-of-funnel (BOFU) pages, where evaluator behavior was taking place. (And, importantly, we can prove that AI traffic is driving users to these high-intent pages.)

In summary: AI answers and the third-party sources they reuse shape what feels credible and safe before a click ever happens. Rankings no longer guarantee you’ll show up in these spaces where buyers now form their shortlist.

That means your organic growth strategy must now expand beyond thinking in keywords and relevance at the page level. You must also cultivate a presence across the spectrum of prompts and AI results that drive users through evaluation stages.

How AI evaluates brands and content

As you now know, AI platforms focus on question-level intent and ask, “What does a complete, credible answer require?”

To do this, large language models (LLMs) expand a single query into multiple sub-questions. This is called query fan-out.

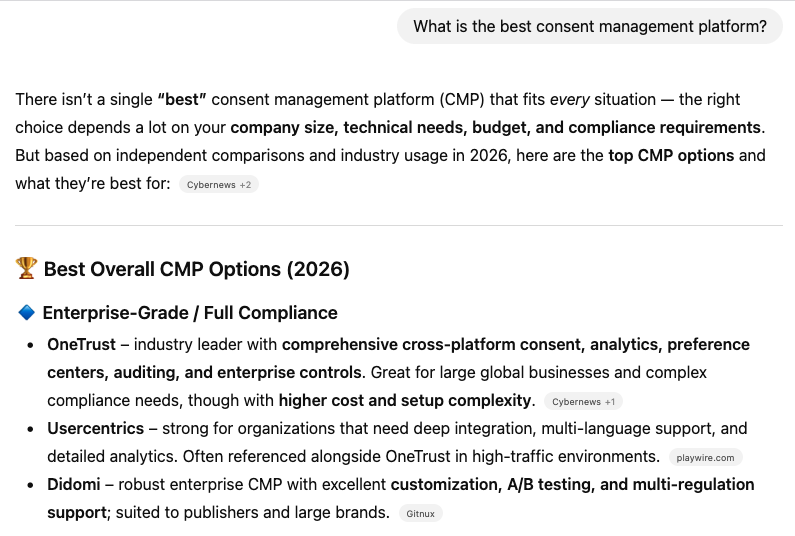

For example, when someone asks: “What’s the best consent management platform (CMP) for a SaaS business?” the system implicitly needs to answer:

- What a CMP does

- How different CMPs compare

- Which are suitable for SaaS

- How hard implementation is

- What privacy compliance risks exist

- How pricing and scale trade-offs work

Each of those sub-questions requires different types of sources. No single page can credibly answer all of them, and a brand that appears in only one slice won’t show up in all the answers where it needs a presence.

For each sub-question, AI tends to prefer content that:

- Directly relates to the specific component of the decision (not related or generic content)

- Demonstrates topical depth and coherence (sustained, comprehensive coverage of the theme across related pages)

- Is easy to extract and reuse (clear claims, scannable structure, obvious takeaways)

- Is consistent with how the brand is represented elsewhere (category, use case, product naming)

- Is reinforced by third-party consensus (reviews, comparison content, credible editorial coverage)

This is where things like entity clarity, consistent brand positioning, extractable content structure, schema, and off-site validation come into play. Together, they help AI systems decide which explanations are safe to reuse when assembling an answer.

The key point is this: AI doesn’t pick the “best page.” It picks the best-supported answer.

We’ll unpack what “best-supported” looks like across the rest of this chapter, and go deeper on content execution and authority reinforcement in subsequent chapters.

Query fan-out explained and the model behind AI answer engines

You now know that AI systems use query fan-outs and draw from multiple sources to build a clear and credible answer.

Across SaaS and tech buying journeys, fan-outs usually cluster around five evaluation facets that cover the full decision journey (rather than focusing on one single prompt or segment).

To be cited — and ideally recommended — by AI systems, you must cover all five.

1. Functional

The functional facet answers the most basic question: Does this product actually solve the problem? For a consent management platform, that might mean explaining what a CMP does, consent collection, preference management, or privacy compliance basics.

Traditional SEO rewards strong product and educational pages. AI systems reward the same thing, but are stricter about clarity and tend to ignore vague positioning and marketing-heavy copy.

They look for explicit mentions of capabilities, defined use cases, and answer-first explanations that they can confidently extract and reuse.

2. Comparative

The comparative facet is where you provide context that enables AI systems to assess how your brand compares to alternatives.

For a CMP, that shows up as queries like “Usercentrics vs OneTrust,” “Didomi alternatives,” “best CMP” lists, and pros and cons breakdowns.

You can win SEO here with strong, editorially sound alternatives and “best X” pages. LLMs often assemble comparisons from third-party listicles, reviews, and editorial summaries. If you’re absent there, you can be excluded even if your own pages rank.

3. Contextual

Content in the contextual facet helps users answer: Is this right for my situation? AI systems filter out generic positioning and look for specifics that match user needs.

Using Usercentrics as an example, content here could answer whether a CMP is a good fit for a SaaS vs e-commerce brand, outline EU vs UK vs U.S. CMP requirements, or explain enterprise vs SMB needs.

SEO success calls for unbiased “best for X” and segment pages. AI systems want this same context, but won’t trust a single source; they pull this nuance from multiple sources and cross-check them for coherence.

4. Implementation

Users and AI systems want detail around what it takes to roll out your product. Your content should outline the effort involved in setup. Be honest about common complaints or trade-offs, such as tool complexity, as well as expected time-to-value.

SEO treats implementation as a late-stage consideration. AI systems scrutinize implementation upfront when shortlisting tools, and may exclude options that they perceive as having rollout risk, like effort, complexity, or the chance of slow or failed implementation. Brands that avoid detail often lose trust here and don’t make it to shortlisting.

5. Economic

Finally, you need content that outlines costs and any trade-offs. Clarify your pricing approach, costs to scale the tool, and ROI expectations.

In traditional SEO, these details are often limited to pricing pages. AI systems foreground cost.

That’s because users want to sanity check costs and any downsides before they invest time evaluating tools. LLMs look for clear, defensible trade-offs (like how pricing scales and cost vs compliance risk) over vague “best value” claims.

What this means for businesses

Your organic growth strategy should cover all five evaluation facets. This applies to content both on and off of your site, and across all the questions your buyers have and that AI tools need answered in order to recommend you.

Remember, if your competitors cover the missing facets better than you do, AI will stitch them into the response, even if your product is objectively stronger.

6 key components of a winning organic growth playbook

Organic growth requires a coordinated system, which is why no single component wins on its own. AI visibility emerges when these elements reinforce each other across Google, AI-driven discovery, and the full buyer journey.

Here are the six key parts of an organic growth playbook that drives AI visibility and, in turn, pipeline.

1. Consistent branding

Keeping your brand and positioning aligned across all your web properties has always been a challenge for businesses.

Between product changes and messaging shifts, resources aren’t always available to keep content up to date. These inconsistencies already confused Google and other search engines, and AI search has raised the stakes.

AI systems generate answers and summaries based on coherence across multiple data sources. This goes for both owned content and third-party sources.

Pages on your own site with old product naming, legacy positioning, and stale use cases create the same category drift and confusion as outdated information on a review site or online forum.

When that happens, AI systems may:

- Exclude your business entirely

- Present hallucinated or incorrect information about you to users

This can directly reduce your share of AI voice, meaning you end up losing out to competitors that AI systems see as better defined and more trustworthy.

Even the best managed brands have inconsistencies. A few I commonly see are:

- Vague naming conventions

- Unaligned visual branding

- Incomplete or conflicting product messaging

- Inaccurate or inconsistent listings

- Lack of alignment across channels

How to achieve entity clarity

Before AI systems can recommend you, they need a clean, consistent understanding of who you are, what you do, and who you serve.

Follow these seven steps to help improve entity clarity.

Audit brand and product messaging

Review the places that AI systems commonly pull from, like your homepage, product pages, pricing, help docs, blog, social bios, video descriptions, listings, directories, and review sites.

Create a canonical brand description and product narrative

Define a brief company description, ideal customer profile (ICP) and use cases, key claims, approved proof points, and exact product and feature names, then align every relevant page to that source of truth.

Optimize About and author bio pages

Clearly state who you are, what you do, and why you’re credible. Summarize your mission, expertise, and track record, and ensure team bios reinforce authority by listing relevant experience and achievements.

Use structured data to reduce ambiguity

Add schema where it improves interpretation. Common starting points include Organization, Product or SoftwareApplication, Person, Article, FAQPage, and breadcrumbs.

Standardize listings everywhere

Keep your name, category, description, locations, hours, and links consistent across major platforms.

Build and manage reviews

Encourage reviews, then respond consistently in your brand voice.

Extend your web footprint strategically

Focus on a few channels where buyers actually research, and show up with consistent positioning, clear descriptions, and verifiable proof points from trusted sources.
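Parts of the audit and source-of-truth steps above can be checked with a small script. Here is a minimal sketch, assuming you have already exported page text into a dictionary. The product names, legacy names, and URLs are hypothetical placeholders, not real data:

```python
import re

# Hypothetical source of truth: approved name -> legacy names that should no longer appear.
CANONICAL_NAMES = {
    "Acme Consent Manager": ["Acme Cookie Tool", "AcmeCMP"],
}


def find_legacy_names(pages, canonical_names):
    """Return {url: [(legacy_name, approved_name), ...]} for pages using outdated naming."""
    findings = {}
    for url, text in pages.items():
        hits = []
        for approved, legacy_names in canonical_names.items():
            for legacy in legacy_names:
                # Whole-word, case-insensitive match on the legacy name.
                if re.search(rf"\b{re.escape(legacy)}\b", text, flags=re.IGNORECASE):
                    hits.append((legacy, approved))
        if hits:
            findings[url] = hits
    return findings


# Hypothetical page text, for example exported from a site crawl.
pages = {
    "/pricing": "Acme Consent Manager plans start with a free tier.",
    "/blog/old-post": "Install the Acme Cookie Tool in five minutes.",
}

legacy_report = find_legacy_names(pages, CANONICAL_NAMES)
for url, hits in legacy_report.items():
    for legacy, approved in hits:
        print(f"{url}: replace '{legacy}' with '{approved}'")
```

A check like this only catches exact legacy strings. Positioning drift and stale use cases still need a human review.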

We’ll return to how this plays out across off-site sources and category consensus in a future chapter. For now, the key point is simple: If your brand can’t be categorized cleanly, the rest of the system has nothing reliable to build on.

2. Technical legibility and crawlability

Technical readiness is essential for visibility. If crawlers and AI agents can’t reliably fetch your pages, or understand their structure, even strong content will be ignored by Google and AI systems alike. On-site legibility usually comes down to four areas:

- Crawl access and indexability

- Machine-readable context (schema and metadata)

- Structural clarity and hierarchy

- Trust and attribution signals [Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T)]

Crawl access and indexability

Before anything else, AI and search systems need to be able to reach your content.

That means key pages:

- Return a clean 200 status code, without redirect chains, broken URLs, etc.

- Aren’t blocked by robots.txt rules or noindex tags

- Use canonical tags that point to the preferred version of the page

- Render important content in the crawler-visible version of the page, not behind scripts, tabs, or interactions that machines may not execute

- Allow the AI crawlers you want included, like GPTBot, Google-Extended, and PerplexityBot

- Don’t sit behind login walls or bot protection rules that block legitimate crawlers

For most teams, once you’ve set this up, you won’t need to do this again unless your site architecture changes.
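One of these checks, whether robots.txt rules allow the AI crawlers you care about, is easy to script. The sketch below uses Python's standard `urllib.robotparser` against a hypothetical robots.txt string. The agent tokens are the ones named above, and it is worth confirming current tokens in each vendor's documentation. It does not cover noindex tags, rendering, or bot protection rules:

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens named in the text; confirm current tokens with each vendor.
AI_AGENTS = ["GPTBot", "Google-Extended", "PerplexityBot"]


def ai_crawler_access(robots_txt, url, agents=AI_AGENTS):
    """Return {agent: bool} for whether the robots.txt rules let each agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Hypothetical robots.txt that blocks one AI crawler, perhaps by accident.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

access = ai_crawler_access(sample_robots, "https://example.com/pricing")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'BLOCKED'}")
```

In a real audit you would load your live robots.txt instead of the sample string and run the check against each key page.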

Machine-readable context (schema and metadata)

The next step is adding schema and metadata so AI systems can interpret what a page represents.

Schema is structured code that defines what a page is and how the entities on it connect. For example, schema can state that a page is an article written by Jane Doe (person), who works for Company X (organization), and that it was published on Date Y.
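To make that example concrete, here is a rough sketch of the same statement expressed as schema.org JSON-LD, built with Python's `json` module. The headline and date are placeholders I've added for illustration, and your CMS or SEO plugin may generate this markup for you:

```python
import json

# Placeholder values: the author, organization, headline, and date are illustrative only.
article_schema = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Example article headline",
    "datePublished": "2025-01-15",  # placeholder date in ISO 8601 format
    "author": {
        "@type": "Person",
        "name": "Jane Doe",
        "worksFor": {"@type": "Organization", "name": "Company X"},
    },
    "publisher": {"@type": "Organization", "name": "Company X"},
}

# JSON-LD is usually embedded in the page inside a script tag of this type.
json_ld_tag = (
    '<script type="application/ld+json">\n'
    + json.dumps(article_schema, indent=2)
    + "\n</script>"
)
print(json_ld_tag)
```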

Metadata is the descriptive information attached to a page that helps systems understand how to frame and present it. This typically includes the meta title and meta description, which signal the page’s topic, intent, and positioning in search results.

This is especially important for AI visibility because, unlike Google, LLMs use these elements to decide whether they will actually read the page contents. Schema and metadata should make it easy for AI systems to figure out:

- What is this page?

- Who wrote it?

- What entity is it about (a company, product, concept, or person)?

- What type of content does it contain?

In practice, adding schema and metadata usually involves:

- Article schema on long-form content

- FAQPage schema where visible Q&As already exist

- Author or Person schema tied to real experts

- Accurate, descriptive meta titles and descriptions that reflect the page’s intent

This work can typically be completed in a focused sprint.

Structural clarity and hierarchy

AI systems don’t read websites the way humans do. So the next step is to focus on making the structure of your content easy for machines to read.

Pages that perform typically have:

- A clear H1 tag that states exactly what the page is about

- Logical H2 and H3 tagged sections that each address a single idea

- Consistent templates across similar page types (guides, product pages, comparisons, etc.)

- A strong internal linking structure that reinforces topical authority and helps Google and AI systems to understand your site’s focus
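The heading checks in this list can be spot-checked automatically. This is a minimal sketch using Python's built-in `html.parser`. The two rules it applies (exactly one H1, no skipped heading levels) are my simplification of "clear H1" and "logical H2 and H3 sections", and the sample HTML is hypothetical:

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect (level, text) for every h1-h6 element in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._buffer).strip()))
            self._level = None


def heading_issues(html):
    """Flag a missing or repeated H1 and any jump of more than one heading level."""
    collector = HeadingCollector()
    collector.feed(html)
    issues = []
    h1_count = sum(1 for level, _ in collector.headings if level == 1)
    if h1_count != 1:
        issues.append(f"expected exactly one H1, found {h1_count}")
    previous = None
    for level, text in collector.headings:
        if previous is not None and level > previous + 1:
            issues.append(f"H{level} '{text}' skips a level after H{previous}")
        previous = level
    return issues


# Hypothetical page fragment with a skipped level (H2 straight to H4).
sample_html = """
<h1>What is a consent management platform?</h1>
<h2>How does a CMP work?</h2>
<h4>Pricing tiers</h4>
"""
issues = heading_issues(sample_html)
print(issues)
```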

I want to touch on internal links for a second. In addition to mapping relationships between pages, internal links help systems discover and index pages. Internal links are crucial for both SEO and GEO.

When it comes to internal linking, focus on three things:

Prioritize contextual links over “menu links”

Links placed within the body copy carry more meaning. They show topical relevance and help connect concepts across pages.

Pillar and cluster linking

Link supporting pages to and from a central pillar page so the topic hierarchy is explicit and the main guide is clearly established as the hub.

Clear anchor text

Use descriptive anchor text that makes the destination and relationship unmistakable.

Finally, don’t let pages become orphaned. Every important page should have at least a few internal links pointing to it so search and AI engines can reliably find, crawl, and reference it.
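If you export internal links from a crawler, a few lines of Python can surface orphaned or weakly linked pages. This sketch assumes a hypothetical `link_graph` dictionary that maps each page to the pages it links to. The threshold of two inbound links is my own stand-in for "at least a few", not a rule from this guide:

```python
from collections import Counter


def inbound_link_counts(link_graph):
    """Count internal links pointing at each page, ignoring self-links and duplicates."""
    counts = Counter({page: 0 for page in link_graph})
    for source, targets in link_graph.items():
        for target in set(targets):
            if target != source:
                counts[target] += 1
    return counts


def weakly_linked_pages(link_graph, min_inbound=2):
    """Return pages with fewer than min_inbound internal links, sorted by URL."""
    counts = inbound_link_counts(link_graph)
    return sorted(page for page, n in counts.items() if n < min_inbound)


# Hypothetical crawl export: page -> internal links found on that page.
link_graph = {
    "/": ["/guide", "/pricing"],
    "/guide": ["/", "/pricing", "/guide/implementation"],
    "/guide/implementation": ["/guide"],
    "/pricing": ["/"],
    "/compare/vendor-a-vs-vendor-b": ["/pricing"],
}

weak_pages = weakly_linked_pages(link_graph)
print(weak_pages)
```

Here the comparison page has no inbound links at all, so it would be the first candidate for new contextual links from the pillar guide.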

Trust and attribution signals (E-E-A-T)

Finally, AI systems look for signals that content is written by someone credible, attributable to a real person or organization, and consistent with what’s published elsewhere.

On site, that means:

- Clear author bylines on relevant content

- Detailed author bios that explain why the author is qualified to write on the topic

- Links to credible profiles or proof of experience (e.g. LinkedIn, publications, speaking engagements, and certifications)

- Consistent naming of products, features, and categories across the site

E-E-A-T matters even more in regulated or high-trust categories like data privacy, where Usercentrics operates. Strong credibility signals often determine whether AI systems include your content in summaries and recommendations or ignore it.

The key point is this: technical SEO is still just as important in the AI search era. Once your technical foundations are in place, they support everything else:

- Content becomes easier to extract and cite

- Content clusters perform more consistently

- Off-site reinforcement has more impact

- Both SEO and AI visibility stabilize

We’ll include an AI readiness checklist and sequencing guidance in an upcoming chapter to help your team execute each step without turning this into a sprawling technical program.

3. Coverage across evaluation journeys

AI systems don’t reward depth in one narrow slice of the customer journey. They favor brands that consistently show up wherever buyers are, from when they’re seeking information on how to solve a problem to when they’re ready to evaluate solutions.

Many marketing teams publish content without an overarching strategy — a guide here, a blog post there, and maybe a comparison page if sales asks for it.

Individually, these pages might be solid, helpful, and relevant to your ICP. But systemically, the coverage is incomplete, disjointed, and fails to convey authority on a given topic.

In a resilient SEO and GEO system, coverage is planned against your customer’s evaluation journey, not against isolated prompts, or even disjointed topic clusters. In other words, topical coverage centers on intent completeness.

As I mentioned above when explaining query fan-out, this means ensuring your ecosystem includes functional, comparative, contextual, implementation, and economic content.

Not every piece of content has to live on your blog. Some of this information is more appropriate on product and pricing pages, in guides, or even off-site in content published by third parties.

What matters is that, taken together, the system leaves fewer unanswered questions for the buyer, and fewer gaps for AI to fill with someone else.

What this looks like in practice

For most teams, improving coverage across evaluation journeys doesn’t mean you have to publish dozens of new pages.

More often, this involves:

- Identifying which evaluation facets are underrepresented

- Expanding or refreshing existing content to close those gaps

- Determining where coverage from third-party sites is needed

- Connecting content across your site through internal linking

- Prioritizing late-journey and high-risk questions that cause buyers to hesitate

4. Extractable content structure

After you’ve planned out your full-funnel content strategy, work on content extractability. Let me explain what I mean here.

Answer engines scan for self-contained units of meaning, i.e., sections that clearly answer a question or resolve a decision point on their own.

If answers are buried in long paragraphs, inconsistent sections, or narrative-heavy formats, answer engines struggle to translate this information cleanly into responses.

We refer to this as modular, extraction-ready content.

How do you design content that AI can extract?

Extractable structure requires you to focus on creating content that’s clear, helpful, and easy to understand. Here are a few tips:

Add a short TL;DR section or key takeaways

Include a quick summary near the top, and add a concise bullet list or tables to clarify your point.

Modular structure

Break pages into clear, self-contained blocks that are usually around 150–300 words. Each is focused on a single idea. A reader (or AI system) should be able to land on a section and understand it without needing to read the entire page.

Use question-led headings

Write H2 and H3 tags that reflect real buyer queries, with a clear hierarchy that makes the page easy to scan.

Answer-first sections

Start these sections with a direct response to the question. Don’t warm up for three paragraphs. State the point early, then earn the reader’s attention with detail and examples.
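Two of these tips, block length and question-led headings, lend themselves to a quick automated pass. The sketch below assumes you have already split a page into hypothetical `(heading, body)` pairs. The question-word list is a rough heuristic of mine, and the 150–300 word range comes from the tip above:

```python
# Rough heuristic: a heading counts as question-led if it ends in "?" or starts with one of these.
QUESTION_WORDS = (
    "what", "how", "why", "which", "when", "who", "where",
    "is", "are", "can", "does", "do", "should",
)


def audit_sections(sections, min_words=150, max_words=300):
    """Return one dict per section with its word count and two simple checks."""
    report = []
    for heading, body in sections:
        words = len(body.split())
        stripped = heading.strip()
        first_word = stripped.split()[0].lower() if stripped else ""
        report.append({
            "heading": heading,
            "words": words,
            "length_ok": min_words <= words <= max_words,
            "question_led": stripped.endswith("?") or first_word in QUESTION_WORDS,
        })
    return report


# Hypothetical sections; the bodies are filler text used only to produce word counts.
sections = [
    ("How hard is CMP implementation?", "word " * 200),
    ("Our platform", "word " * 40),
]

section_report = audit_sections(sections)
for row in section_report:
    print(row)
```

A script like this can't judge whether a section actually answers its question first. It only flags blocks worth a closer look.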

The section you have just read is a good example of a modular content structure. Here is another example we used for Usercentrics.

Good content structure has always been an essential SEO tactic because it improves readability, engagement, and featured snippet eligibility.

And AI visibility and snippets aside, extractable content is easier for humans to read and digest on a screen (especially on mobile), so it’s a win-win.

We’ll go much deeper on how to design content this way, including examples and patterns, in an upcoming chapter. For now, remember that if AI systems can’t extract your thinking cleanly, they won’t use it, no matter how strong the underlying insight is.

5. Off-site trust signals

AI systems rely on off-site signals from third-party sources to cross-check your claims and validate accuracy, relevance, and consensus.

Google has always done this to some degree, which is why link building is a pillar of a successful SEO strategy.

What’s changed is how directly off-site signals influence AI answers; off-site trust is now about where you appear and how you’re described, not just how many links point at your domain.

In traditional SEO, the value of a link placement is often judged by metrics like domain rating (DR) or referral traffic.

In modern GEO, a mention on a highly cited comparison page can matter more than a high-DR link, and being present inside the sources AI already reuses outweighs raw link equity.

AI engines favor brands that appear consistently across trusted, category-relevant sources, including:

- Review and comparison platforms where customers validate use cases, weigh pros and cons, and share lived experience with your product

- Editorial listicles and category roundups that contextualize the market and provide a shortlist of options

- Contextual brand mentions in relevant guides and discussions that explain why a product is used

What’s more, off-site GEO work is less about chasing individual placements and more about reinforcing a coherent narrative across the web.

AI platforms assess every source your brand is mentioned in to form an opinion about it. So you need to be sure that third-party sources talk about your brand the way your brand talks about itself. Messaging consistency across sources plays a huge role in how accurately LLMs characterize you, and whether they mention you at all.

When your brand positioning and owned content are strong, third-party reinforcement acts like a multiplier that helps increase visibility in AI answers and drive better-qualified traffic when buyers are ready to click.

6. AI prompt tracking

Alongside your strategy development, you’ll work on prompt selection and tracking. This enables you to monitor how your brand shows up in the AI searches your ICP makes.

We use our internal AI monitoring tools to track AI visibility across platforms like ChatGPT, Perplexity, Google Gemini, and Microsoft Copilot.

My first tip: Don’t approach AI prompt tracking like traditional keyword tracking.

The goal isn’t to mirror branded search demand or inflate coverage with endless variations. And in most cases, we avoid tracking purely branded prompts unless they reflect genuine comparison or evaluation behavior where decision intent is clearly present, like in a “brand vs competitor” prompt.

Instead, we build a tightly curated, decision-relevant prompt set grounded in ICP behavior and real buying context.

At Skale, we track well-distributed prompts that cover discovery, capability validation, comparison, and risk-proofing stages. These prompts should be balanced across decision depth:

- 25–30% should focus on discovery

- 25–30% should focus on capability validation

- 20–30% should focus on active comparison

- The remainder should address risk-proofing to ensure no single stage dominates the set of prompts you track

This ensures you’re measuring AI influence across the full buying journey, not just replicating SEO logic inside an LLM environment. And every prompt should pass a quality check. Ask yourself:

- Which persona would ask this?

- Which use case does it represent?

- Which decision moment does it map to?

If you can’t clearly answer those three questions, the prompt doesn’t make the cut.
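The stage balance and the three-question quality check can both be validated before a prompt set goes into tracking. This is a sketch only, not how our internal tooling works. The field names (`stage`, `persona`, `use_case`, `decision_moment`) and the sample prompts are assumptions made for illustration:

```python
from collections import Counter

# Target share of the prompt set per stage, taken from the ranges above.
# Risk-proofing has no stated range because it takes the remainder.
STAGE_RANGES = {
    "discovery": (0.25, 0.30),
    "capability_validation": (0.25, 0.30),
    "comparison": (0.20, 0.30),
}
REQUIRED_FIELDS = ("persona", "use_case", "decision_moment")


def review_prompt_set(prompts):
    """Return (stages outside their target range, prompts failing the quality check)."""
    total = len(prompts)
    counts = Counter(p["stage"] for p in prompts)
    out_of_range = {}
    for stage, (low, high) in STAGE_RANGES.items():
        share = counts[stage] / total if total else 0.0
        if not low <= share <= high:
            out_of_range[stage] = round(share, 2)
    failing = [p["text"] for p in prompts if not all(p.get(f) for f in REQUIRED_FIELDS)]
    return out_of_range, failing


def make_prompt(text, stage, persona="Head of Privacy"):
    """Build a hypothetical prompt record for the example below."""
    return {
        "text": text,
        "stage": stage,
        "persona": persona,
        "use_case": "SaaS consent management",
        "decision_moment": "shortlisting",
    }


prompts = (
    [make_prompt(f"discovery prompt {i}", "discovery") for i in range(5)]
    + [make_prompt(f"capability prompt {i}", "capability_validation") for i in range(5)]
    + [make_prompt(f"comparison prompt {i}", "comparison") for i in range(8)]
    + [
        make_prompt("risk prompt 0", "risk_proofing"),
        make_prompt("risk prompt 1", "risk_proofing", persona=""),  # no persona: fails the check
    ]
)

out_of_range, failing = review_prompt_set(prompts)
print("Stages outside target range:", out_of_range)
print("Prompts failing quality check:", failing)
```

In this made-up set, comparison prompts make up 40% of the total, so the set would need rebalancing before it is used.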

When you track the right prompts, you’ll get reliable, actionable AI visibility insights that can be trusted over time.

Of course, you need to track AI prompts along with SEO performance, like rankings and clicks, for a holistic view of your organic growth performance.

But don’t stop there: be sure to tie these metrics to the indicators that really matter for your brand, like SQLs and pipeline growth. (More on that in an upcoming chapter of this guide.)

How to incorporate GEO into your organic growth strategy: getting started and continuous monitoring

Where should you begin when developing an organic growth strategy? A brand audit and query fan-out are good starting points. Then, you need to run ongoing checks and audits. Here are my tips for each stage of the process.

Get started with initial evaluation

You have to evaluate where your brand stands in terms of visibility, both on AI platforms and beyond.

A holistic brand audit helps with this. Here, you’ll evaluate your current brand coverage and share of voice.

Brand coverage measures how comprehensively your brand appears across your category’s decision moments. Look beyond search rankings to evaluate coverage in AI answers, comparison pages, communities, review platforms, and “best X for Y” queries.

This enables you to determine whether your brand consistently shows up as a credible choice when buyers explore, compare, or validate options.

And remember, third-party content, review platforms, online communities, and social media all contribute to how you perform in AI searches, which is part of the reason I recommend evaluating them in your brand audit.

How to evaluate brand coverage

Set up monitoring in your AI tool for relevant prompts, Google results for relevant keywords, review platforms, and social.

Evaluate how much coverage you receive and what kind (positive vs. negative sentiment, source authority, topical relevance, etc.)

Determine where your brand shows up the strongest and where it’s lacking visibility.

Share of voice, by contrast, measures your exposure relative to competitors within a defined channel, be it AI mentions, paid ads, or keyword rankings.

You can dominate paid search or rank well organically and still be missing from AI summaries, vendor comparisons, or peer discussions. And if you aren’t included in those recommendation layers, you’re letting competitors fill that space.

How to evaluate share of voice

Define which competitors you’ll track against (approx. 2–3 hours of work)

Measure your mentions vs. competitor mentions in the same environment you monitor for brand coverage (approx. 2–3 hours of work)

Divide your brand’s mentions by total mentions across your competitive set (approx. 2 hours of work)
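The calculation in the last step is simple enough to express in a few lines. Here is a minimal sketch with made-up mention counts; the brand names and numbers are hypothetical:

```python
def share_of_voice(mentions, brand):
    """Brand mentions divided by total mentions across the competitive set."""
    total = sum(mentions.values())
    if total == 0:
        return 0.0
    return mentions.get(brand, 0) / total


# Hypothetical mention counts from one channel, such as AI answers to a fixed prompt set.
ai_mentions = {"YourBrand": 18, "Competitor A": 27, "Competitor B": 15}

sov = share_of_voice(ai_mentions, "YourBrand")
print(f"Share of voice: {sov:.0%}")  # prints "Share of voice: 30%"
```

Calculate this per channel rather than pooling everything, since the definition above measures exposure within a defined channel.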

Brand coverage and share of voice both measure your general visibility across various channels. Now it’s time to strategize how you will secure visibility across the full decision journey using query fan-out.

How to map fan-out for a topic

Start with one commercially meaningful buyer question, the kind that shows real evaluation intent, not a generic prompt. For a CMP category, that might be “best consent management platform for SaaS,” or “CMP pricing and implementation.”

Next, map how AI expands that single question into the five recurring evaluation criteria we discussed above: functional, comparative, contextual, implementation, and economic.

Then validate your map against observed AI behavior (not assumptions). Run the same decision query across distinct LLMs, like ChatGPT, Gemini, and Perplexity, and capture:

Which evaluation facets dominate in AI results

Which sources are repeatedly cited

Which competitors show up (and where)

Whether your brand appears consistently across evaluation facets or only in one slice
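If you record these four things in a consistent shape, tallying them becomes trivial. Here is one possible sketch in Python; the `Observation` fields, the brand names, and the source domains are all hypothetical, and you would fill the log by hand from the answers you actually see.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Observation:
    """One AI answer captured for one query on one platform."""
    platform: str  # e.g. "ChatGPT", "Gemini", "Perplexity"
    facet: str     # functional, comparative, contextual, implementation, economic
    brands: list[str] = field(default_factory=list)   # brands named in the answer
    sources: list[str] = field(default_factory=list)  # domains the answer cited


def summarize(observations: list[Observation], brand: str):
    """Tally facets, cited sources, and the facets where `brand` appears."""
    facet_counts = Counter(o.facet for o in observations)
    source_counts = Counter(s for o in observations for s in o.sources)
    brand_facets = sorted({o.facet for o in observations if brand in o.brands})
    return facet_counts, source_counts, brand_facets


# Hypothetical observations; replace with what you actually record.
log = [
    Observation("ChatGPT", "comparative", ["CompetitorA", "YourBrand"], ["reviews.example"]),
    Observation("Gemini", "comparative", ["CompetitorA"], ["reviews.example", "blog.example"]),
    Observation("Perplexity", "economic", ["CompetitorB"], ["blog.example"]),
]

facets, sources, present_in = summarize(log, "YourBrand")
print(facets.most_common())    # which facets dominate
print(sources.most_common(3))  # which sources are cited repeatedly
print(present_in)              # facets where your brand shows up
```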

If you want to speed this up or monitor it over time, many AI monitoring tools support fan-out testing. But manual cross-platform checks are still the most reliable way to see what models are actually doing in your category.

Next, do a fast gap check using your SEO toolset and site data.

Use Ahrefs to keep your query shortlist grounded in commercial relevance. Screaming Frog and GSC/GA4 can show you whether you actually cover all the key facets and whether the relevant pages are connected through careful internal linking.

Finally, analyze your owned and earned sources:

Which facets are credibly answered on-site in a way that’s easy to reuse?

Which facets are answered off-site (reviews, listicles, comparisons you’re absent from)?

Where does AI default to competitors because your coverage is thin, disconnected, or hard to extract?
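To make the answers to these three questions comparable across topics, you can label each facet the same way every time. The sketch below is one way to do that in Python, not a prescribed method: the label wording and the example inputs are my own assumptions.

```python
FACETS = ["functional", "comparative", "contextual", "implementation", "economic"]


def classify_facets(on_site: set[str], off_site: set[str],
                    competitor_cited: set[str]) -> dict[str, str]:
    """Label each facet by where your brand is covered and whether competitors hold it."""
    labels = {}
    for facet in FACETS:
        if facet in on_site and facet in off_site:
            labels[facet] = "covered on-site and off-site"
        elif facet in on_site:
            labels[facet] = "on-site only"
        elif facet in off_site:
            labels[facet] = "off-site only"
        elif facet in competitor_cited:
            labels[facet] = "gap: competitors cited, you are absent"
        else:
            labels[facet] = "uncovered"
    return labels


# Hypothetical audit result for one topic.
labels = classify_facets(
    on_site={"functional", "implementation"},
    off_site={"functional", "comparative"},
    competitor_cited={"comparative", "economic"},
)
for facet, label in labels.items():
    print(f"{facet}: {label}")
```

Facets labeled as gaps are the ones to prioritize, because AI answers are already filling that space with someone else.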

This is front-loaded work with a light maintenance cadence. Start with a full fan-out mapping round (around 20 priority queries, with deeper maps for your top five).

Then revisit it quarterly, or sooner if AI referrals shift materially, your positioning changes, or competitors start showing up in facets where you’ve been absent.

Defend your position with ongoing checks and auditing tasks

Your AI visibility shifts as platforms evolve, competitors publish new content, and query patterns change. That’s why you need to run regular checks to maintain it.

Here’s what to do:

Start with a quarterly AI visibility review

Select your top 15–20 commercial queries and test them across ChatGPT, Gemini, and Perplexity. Document whether you’re cited, which competitors appear, and what types of pages are referenced. The point is to test a consistent sample so you can spot patterns and changes over time.

Review query fan-out coverage for priority topics

For your top clusters, map whether you adequately cover the five core facets: functional, comparative, contextual, implementation, and economic. If competitors are cited for facets you don’t address, that’s a clear content gap.

Run a technical audit twice per year

Confirm AI crawlers are allowed, key pages return clean 200 status codes, schema is valid, and internal linking is strong.

Monitor entity consistency and brand mentions monthly

Finally, track how your brand is described across review sites, directories, and cited pages. Watch for category drift, inconsistent product naming, or competitor share of voice gains.
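For the technical audit step, the "AI crawlers are allowed" check can be partly scripted with Python's standard-library robots.txt parser. Treat this as a minimal sketch under stated assumptions: the crawler tokens in `AI_CRAWLERS` are names I believe are in use, but confirm the current ones in each vendor's documentation, and the sample robots.txt and URL are hypothetical. It only evaluates robots.txt rules; status codes, schema, and internal linking need separate checks.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; verify against each vendor's documentation.
AI_CRAWLERS = ("GPTBot", "PerplexityBot", "ClaudeBot")


def blocked_crawlers(robots_txt: str, url: str, agents=AI_CRAWLERS) -> list[str]:
    """Return the agents that this robots.txt disallows from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt content that blocks one AI crawler site-wide.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

print(blocked_crawlers(sample, "https://www.example.com/pricing"))  # prints ['GPTBot']
```

Run it against the robots.txt of your live site for each of your key pages; an empty list means none of the listed crawlers is blocked by robots.txt for that URL.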

Ideally, this process should become a structured 90-day loop: test, diagnose gaps, implement improvements, then retest.
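The "retest" part of that loop only works if each run is comparable with the last. One way to do that, sketched here in Python with hypothetical queries and results, is to key every run by query and platform and then diff two runs.

```python
Run = dict[tuple[str, str], bool]  # (query, platform) -> was your brand cited?


def diff_runs(previous: Run, current: Run):
    """Return the (query, platform) pairs where citations were gained or lost."""
    gained = sorted(k for k, cited in current.items() if cited and not previous.get(k, False))
    lost = sorted(k for k, cited in previous.items() if cited and not current.get(k, False))
    return gained, lost


# Hypothetical results from two consecutive quarterly reviews.
last_quarter = {
    ("best consent management platform for SaaS", "ChatGPT"): True,
    ("best consent management platform for SaaS", "Gemini"): False,
    ("CMP pricing and implementation", "Perplexity"): True,
}
this_quarter = {
    ("best consent management platform for SaaS", "ChatGPT"): True,
    ("best consent management platform for SaaS", "Gemini"): True,
    ("CMP pricing and implementation", "Perplexity"): False,
}

gained, lost = diff_runs(last_quarter, this_quarter)
print("Gained:", gained)
print("Lost:", lost)
```

Anything in the lost list is where to start the next diagnose step.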

Visibility is a long-term investment, not a one-time hack

If this chapter leaves you with one takeaway, let it be this: SEO and GEO aren’t separate. They are inputs into the same growth system.

Organic growth is less about doing one big thing and more about doing the right small things consistently. This compounds over time and protects your traffic in an era when search doesn’t behave the way it used to.

In a modern organic growth playbook, the goal is to earn a place on the shortlist before a buyer ever lands on your site. That only happens when your brand is easy to categorize, your content is easy to pull answers from, and other credible sources reinforce your claims.

It also means you show up with clear, defensible detail when buyers look for practical information about how your product works, what it costs, and which problems it addresses.

Next up, we’ll focus on the lever you control most directly: content. In upcoming chapters, we will explain how to structure content so it’s easy to extract, genuinely useful during evaluation, and more likely to turn visibility into qualified pipeline.