Why Quality Content Is a Traffic Moat

In chapter one, you learned all about AI-first search behavior and the click squeeze that’s plaguing many brands right now.

In chapter two, you saw how AI systems assemble answers, and what you need to do to appear in front of your intended audience when they’re creating their shortlist.

Traditional search used to reward the single “best” page. AI systems work differently. They pull fragments from multiple sources throughout the evaluation journey, and that changes the role content needs to play.

AI systems need content that’s accessible, structured, retrievable, and credible enough to be used to generate answers and recommendations. Buyers, meanwhile, still need trustworthy sources they can return to when they move from AI-driven discovery into real evaluation.

That’s why content remains one of the strongest defensive assets your brand can build.

If your content isn’t clear enough for LLMs to extract, or trustworthy enough to safely cite, it won’t appear in AI answers.

And if it’s not credible enough for discerning buyers, it won’t do you any good when they finally click through to your site to validate what the LLM has told them.

Your job is to build content that AI systems can extract and cite, and that buyers trust enough to act on.

I’ll walk you through the process of picking the right targets and building out comprehensive content clusters that strengthen brand authority. Then, I’ll explain how to optimize content for specific LLMs and, most importantly, how to build credible, authoritative, high-trust content.

At a Glance

- Content now shapes SERP performance, AI visibility, and buyer confidence. Strong content earns placement in AI-generated answers while giving buyers the depth and clarity they need when evaluating options.

- Publishing more content isn’t a winning strategy in today’s search environment. Generic, repetitive articles are easy to produce and easy to ignore. Content earns results through genuine insight, originality, and trust.

- Topical authority is built through connected content clusters. Brands gain stronger visibility by covering the full evaluation journey across a topic, with each page answering a distinct buyer question and supporting the others.

- Buyer-focused content does more work than broad informational content. Pages that address implementation, trade-offs, cost, risk, and fit are especially valuable in technical categories. They help serious buyers make decisions.

- Clear structure improves both readability and AI extractability. Question-led headings, modular sections, answer-first writing, and skimmable formats like tables or TL;DRs make content easier for customers to navigate and for AI engines to cite.

Why Publishing More (Without a Clear Plan) Can Actually Damage Trust

For a long time, we treated informational SEO as a straightforward growth opportunity. Publish enough articles targeting the right keywords and, in theory, your traffic compounds.

It worked, sort of. You could cover a wide range of early-stage queries, win rankings, and pull in a steady stream of top-of-funnel visits.

But even then, this was a cheap tactic aimed at boosting vanity metrics like clicks and traffic. Too often, the top ten ranking articles were variations of the same thing: the same structure, the same examples, and the same advice, just reworded.

The difference now is that AI systems are good at absorbing and summarizing this informational content, whether that’s in an AI Overview or a chat-based answer.

Users can get the gist without needing to click through to your site. So in many cases, the purely informational layer has been commoditized by AI.

At the same time, generative AI has lowered the cost of publishing. What used to require days of careful research and writing can now be produced in minutes, which makes it easy to repeat the failures of the old content farm model.

Whether you scale production with AI or with isolated, inexperienced writers who lack context, you risk trading your brand’s credibility for cheap content.

“The old model optimized for indexation and keyword coverage, not for information value,” explains Skale’s Organic Growth Lead Kristina Pantelic. “But AI systems don’t reward volume; they reward clarity, authority, and extractable information.”

So without a tight strategy and an experienced editorial team involved at every stage, you end up with thin coverage that adds little expertise or information gain and recycles the same stats, facts, and takes. The resulting loss of trust eventually forces you into human-led rewrites, sometimes sitewide.

In trust-heavy categories like compliance, cybersecurity, or finance, that causes real damage. Weak content both underperforms and makes your brand less credible.

That matters both before and after the click. AI systems are more likely to surface content they can treat as reliable, and buyers in high-trust categories are far more likely to scrutinize what they read before moving forward. I’ll unpack what that means in the next section.

The strategic role of content has changed. AI engines explain the topic, but high-trust content still shapes the final decision. As a result, content now requires more originality, more discernment, and higher trust than ever before.

In practice, that may mean you publish slightly less, but the content you do publish does far more work.

Why AI Systems Still Rely on SEO-Friendly Content

While the approach you should take to building out your content program has changed, the underlying goal is still the same: create content that adds value and is easy for both readers and search engines to understand.

AI systems look for many of the same foundations traditional search engines like Google have always relied on, so showing up in AI search still depends on good SEO practices.

Before a page can influence an AI answer, the system has to be able to find it, understand it, extract from it, and feel confident referencing it.

That means content must be:

Technically retrievable

Pages need to be crawlable and indexable. They should also be supported by internal links that make them discoverable and contextualize how they relate to the rest of your site.

Structurally clear

Pages that have question-led headings, modular sections, self-contained explanations, and obvious hierarchy are easier for AI systems to categorize and cite.

Semantically explicit

Strong pages state clear claims, define terms, explain trade-offs, compare options, and provide examples that can be lifted cleanly into AI search results.

On top of that, content needs signals that it’s safe to cite, like credible sources and author credentials. There are two reasons for this:

- Trust for AI platforms: This calls for consistency, clarity, and supporting evidence so that the system is confident referencing your page.

- Editorial trust for humans: This calls for a page that’s accurate, useful, honest, and clearly written by people who understand the category.

This ties directly into Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T) requirements, which we’ll dive into later in this chapter.

These trust signals matter to Google, to LLMs, and to the people reading your page. They make content easier to rank, easier to cite, and easier to trust.

But before AI systems even evaluate a single page, they are also looking for something else: evidence that your brand covers the full evaluation journey.

This is where the query fan-out model from chapter two comes back in. If your ecosystem only answers one slice of the problem, your visibility in AI answers will be inconsistent at best.

Build a Content Plan That Mirrors the Evaluation Journey

As you already learned, AI systems evaluate brands across topic ecosystems rather than isolated blog posts. This calls for a cluster-by-cluster content approach, which is a foundational SEO principle. But you’ll need to adjust the way you build clusters for the AI search era.

A complete cluster isn’t just a collection of articles around a theme. You must move from asking which keywords are missing to asking which intent gaps you’re leaving open.

Remember how we ran through a query fan-out to determine the questions your buyer needs answered?

“Traditional keyword research tells you what people search for,” explains Kristina. “Query fan-out tells you how AI systems interpret and explain a topic. Those two things are not always the same.”

I like to think of clusters as a content system that helps buyers move from “What is this?” to “Is this right for us?” and eventually to “Are we ready to act?”

Your content cluster must cover all five evaluation facets: functional understanding, comparisons, contextual fit, implementation feasibility, and economic justification.

What a Complete Cluster Looks Like

At a practical level, a complete cluster usually includes four things:

- One hub page on the core topic

- Supporting pages mapped to distinct evaluation questions

- Close internal linking between cluster pages

- Clear routes to decision-stage pages

The hub page establishes the main topic and often acts as the broadest, most comprehensive entry point. Supporting pages then go deeper on the key questions buyers ask as they evaluate your solution.

When your clusters are complete, comprehensive, and designed to move the reader through the full evaluation journey, you can see results like these.

These are the results of one of Usercentrics’ technical content clusters.

- Impressions climbed 73 percent QoQ

- Clicks more than doubled (101 percent) QoQ

Along with these metrics, Usercentrics saw an increase in high-intent behavior coming from AI traffic (meaning site visitors were doing things like checking out the pricing or demo page), plus an increase in AI search traffic of 27 percent QoQ. This is an example of the kind of content compounding we strive for.

This cluster answered multiple evaluation questions around a high-intent topic while demonstrating real topical authority. Even in a search environment shaped by zero-click behavior, the cluster remained resilient because it supported the full decision-making journey.

How to Avoid Cannibalization When Building Clusters

Each page in a cluster should answer a distinct decision question. That sounds obvious, but it’s something brands often fail to do. Companies regularly publish multiple pages that cover the same foundations without meaningful differentiation.

An ineffective cluster looks like this:

- CMP overview

- CMP guide

- CMP explanation

- What is a CMP

These pages are likely to repeat the same general information about consent management platforms (CMPs). They’d compete with each other and dilute the brand’s authority.

Instead, plan your clusters so each page has a clearly defined job (that you identified using query fan-out). If two pages answer the same question or fulfill the same user intent, merge them. Here’s how I would rework the above cluster:

- What is a consent management platform

- How CMP implementation works

- CMP pricing models

- CMP compliance requirements

- CMP alternatives or vendor comparisons

That’s very different from publishing several overlapping pages that all explain the same concept from slightly different keyword angles.

Remember, a strong cluster will expand your coverage and authority, instead of fragmenting it.
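If you record which fan-out questions each planned page answers, you can spot overlap before it turns into cannibalization. The Python sketch below is a hypothetical illustration, not a Skale tool: it scores every pair of pages by Jaccard overlap (shared questions divided by all questions the two pages cover) and flags pairs at or above an assumed 0.5 threshold as merge candidates. The page slugs, question labels, and threshold are placeholders.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of questions two pages have in common (0.0 = none, 1.0 = identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def merge_candidates(pages: dict[str, set[str]], threshold: float = 0.5) -> list[tuple[str, str, float]]:
    """Return page pairs whose question overlap meets or exceeds the (assumed) threshold."""
    flagged = []
    for (slug_a, qs_a), (slug_b, qs_b) in combinations(pages.items(), 2):
        score = jaccard(qs_a, qs_b)
        if score >= threshold:
            flagged.append((slug_a, slug_b, round(score, 2)))
    return flagged


# Hypothetical cluster: each page slug maps to the buyer questions it answers.
cluster = {
    "cmp-overview": {"what is a cmp", "how does a cmp work"},
    "cmp-guide": {"what is a cmp", "how does a cmp work", "do i need a cmp"},
    "cmp-pricing": {"what does a cmp cost", "cmp pricing models"},
    "cmp-implementation": {"how does cmp implementation work", "how long does rollout take"},
}

for a, b, score in merge_candidates(cluster):
    print(f"Consider merging {a} and {b} (overlap {score})")
```

Here only the overview and guide pages are flagged, because they share two of their three combined questions. A higher threshold catches only near-duplicates, while a lower one surfaces partial overlap for an editor to review; either way, the score prompts editorial judgment rather than replacing it.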

The Importance of Building Content That Actually Answers Buyer Questions

While you’re planning your content clusters, be sure to include content that helps buyers evaluate and disqualify a product.

Especially when it comes to technical categories, potential customers aren’t looking to be entertained, and they’re definitely not interested in reading the same recycled message they could find in any other article on page one of Google.

If you’re creating a technical cluster in a high-trust category, you’re going to have skeptical buyers. That means your content needs to answer questions like:

- Can this work in our stack?

- Will this meet compliance requirements?

- How complex is implementation?

- What are the trade-offs?

- Is the cost justified?

Content that avoids these questions often creates suspicion because it sounds like a marketing asset rather than a genuinely helpful review of tools or explanation of a platform.

On the flip side, strong evaluation content acknowledges limitations, explains caveats, and gives people enough detail to understand the operational reality of the product.

Proprietary insights are especially valuable here. Providing people with information they can’t find anywhere else is one of the clearest ways to stand apart from generic content.

You can achieve this by adding original, unique perspectives and opinions from your brand and team members to your content.

So where possible, contribute original data, real implementation lessons, or category-specific observations that come from a place of authority and expertise.

Last year, Skale decided to prioritize evaluation-stage guide content for Usercentrics. Instead of focusing purely on discovery queries, we created guides that addressed topics like:

- Regulatory requirements

- Rollout complexity

- Technical trade-offs

- Risk exposure

These pages went beyond introducing and explaining a topic; they helped buyers move closer to a decision. Because the content served as a genuinely helpful evaluation asset, guide clicks increased by 22 percent QoQ.

Why Biased Listicles Can Backfire

Quality listicles that compare products honestly and transparently are a great resource for helping buyers evaluate tools. When done right, they can address the buyer skepticism we’ve been talking about and build trust in your brand.

Bottom-of-funnel (BOFU) listicle content has long been a popular SEO tactic, and these articles have historically performed well on SERPs. But “best tools” articles have become riskier in the AI search era.

If it’s not approached the right way, this content can be thin, self-promotional, low-evidence, and low-value. At best, it may create short-term AI visibility, but over time, these low-effort assets tend to weaken trust and credibility. You may even be penalized for them.

AI search analyst Lily Ray documented significant volatility following the December 2025 Core Update, with several SaaS brands seeing sharp declines in blog-level Google visibility in January.

One pattern that appeared repeatedly across those impacted was heavy reliance on biased, low-quality “best tool” listicles, with some brands having thousands of these articles on their sites.

While Google didn’t explicitly confirm that it’s penalizing this kind of listicle, the logic is consistent with how the company’s review systems have evolved. Readers can tell when content is self-serving, and search systems can too.

But the impact here isn’t just in SERP performance. LLMs still depend heavily on retrievable, ranking content, and weak pages can hurt twice: they lose visibility in Google, and AI visibility often falls at the same time.

Content needs evidence, category nuance, and a reason to exist beyond “we want to rank for this term” if you want it to support a holistic organic growth and AI visibility strategy.

What High-Trust Content Actually Requires

As noted earlier, both readers and AI systems can tell when content is self-serving and low value. That’s why you need clear trust signals on the page.

Both are looking for:

- Evidence that information comes from people with real experience in the category

- Explanations that are accurate and grounded in operational reality

- Proof that the content has been produced with strong editorial standards

In SEO, these ideas have been around for years in the form of Google’s E-E-A-T framework.

And yes, E-E-A-T is still important in the AI search era. Below, I’ll break down how each of these trust signals shows up.

Experience

In Google’s E-E-A-T framework, “experience” refers to how much first-hand involvement the author has with the topic they’re writing about. That might mean using the product, working in the industry, or dealing directly with the operational challenges being discussed.

Content that shows experience includes things like:

- Clear indications of hands-on experience with the topic

- Detailed, nuanced insights that come from practical application

- Real-life examples and case studies

- Content that reflects an understanding of common challenges or questions in the field

These details are hard to replicate without direct exposure to the topic. To include them in your content, speak to internal subject matter experts who work closely with the product and are familiar with the kinds of problems your customers face, as well as what it takes to implement your solution.

Expertise

While experience relates to the content creator’s first-hand experience in a field, expertise relates to their depth of knowledge in a specific topic.

In content, you can show expertise through:

- Accurate, up-to-date information

- Clear explanations of complex ideas

- Correct use of technical or industry-specific language

- Details that go beyond surface-level summaries

Expertise is also supported by showing proof of a writer’s formal credentials, certifications, published work, or recognized industry experience.

This can come from subject matter experts or experienced writers with a strong grasp of the category. That said, in more technical categories (like consent management), writers often need expert input to add depth, accuracy, and specificity.


Authority

Authority reflects how strongly a brand is respected as a thought leader within its niche. While expertise shows up in the quality of the writing and in the author’s bona fides, authority builds over time as your brand consistently produces useful content across your category.

You build authority when you:

- Publish reliable content across related topics within the same domain (covering the full evaluation journey)

- Earn citations, brand mentions, or backlinks from credible sources

- Are referenced by industry publications and experts

- Have a presence on social media and other third-party platforms

- Maintain consistent product and category messaging across your content

You’re not going to build authority with a single article. You need sustained, quality content that covers all angles of your topic. Over time, that’s going to signal to readers, search engines, and AI systems that your brand is a well-founded source of information.

Trustworthiness

While expertise and authority show knowledge and depth of insight on a topic, trustworthiness reflects how credible and reliable the content is.

You build trustworthiness through signals like:

- Credible sources and referenced data

- Current, up-to-date information

- Clear caveats and honest discussion of any trade-offs

- Transparent editorial practices

That last point matters more than many brands realize. Trustworthiness also comes from being open about the editorial system behind the content. That can include reviewed-by bylines, fact-checked notes, clear source attribution, transparent AI-use disclosures where relevant, or a broader trust centre.

For example, this chapter was created in close partnership with Skale Content Specialists Bree Recker and Simone Bradley. It went through three writing rounds, three editing rounds, reverts, and QA, as well as fact checking by Skale Organic Growth Lead Kristina Pantelic, who also provided subject matter expert insights.

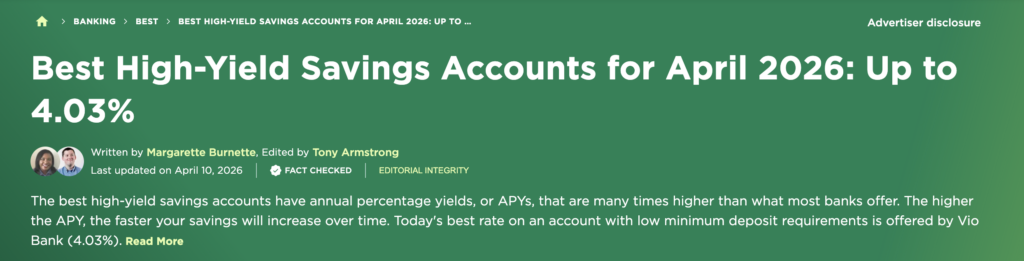

NerdWallet exemplifies the idea of an editorial trust section. Alongside its editorial guidelines, many pages show readers how the content has been reviewed, fact-checked, and maintained.

They have notes on editorial integrity, clearly disclose where they have advertising partnerships in place, and make their list of advertisers available. This makes the editorial process transparent for readers, which is a valuable trust signal.

Quality Assurance

While it’s not officially part of Google’s E-E-A-T guidelines, quality assurance is also a critical component of expertise and an essential trust signal.

Strong content standards reinforce trust by improving factual accuracy, maintaining consistent terminology and brand messaging (also important for AI readiness), and reducing the risk of weak or misleading claims. In more technical or regulated categories, QA should include expert review before publication.

How to Structure Content So AI Systems Can Extract It

Ensuring your content hits all the right E-E-A-T notes is only part of the job. You also need to structure it in a way that makes it easy for AI systems to find, interpret, and reuse accurately.

Humans typically read a page from top to bottom. But AI-driven search systems scan for clear, self-contained sections that answer a question, explain a concept, or compare options in a way that can be extracted without losing meaning.

That’s why a fundamental writing principle called chunking has come back in full force. I was first introduced to the idea in digital journalism 101, where it’s taught as a way to make content easier for people to scan, process, and recall.

Structural tweaks like chunking make your content easier for AI to parse. But that doesn’t mean you should distort your content for machines. Google’s Danny Sullivan has repeatedly warned against over-optimizing content into tiny fragments just to please LLMs.

At Skale, our view is that chunking and writing for humans aren’t mutually exclusive. When done right, good chunking is simply good content design; it helps readers scan and understand information more easily. Better AI extractability is a nice bonus, too.

So, what does that actually look like in practice? Here are a few ways to structure content so it works better for readers (and AI systems).

Modular Sections

Each section should focus on one idea.

As a rule of thumb, use 150–300 word sections where it makes sense to do so. That’s usually enough space to answer a question, add context, and give a useful example without drifting into multiple ideas at once.

Each block should be readable in isolation.
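If your drafts live in Markdown, a short script can flag sections that drift outside the 150–300 word rule of thumb before an editor reviews them. This is a minimal sketch under stated assumptions: section headings are `##` or `###` lines, any text before the first heading is ignored, and the word range is a guideline rather than a hard limit.

```python
import re

# Assumption: section headings are Markdown "##" or "###" lines.
HEADING = re.compile(r"^#{2,3}\s+(.*)$")


def section_word_counts(draft: str) -> list[tuple[str, int]]:
    """Split a draft on headings and count the words in each section body."""
    sections: list[tuple[str, int]] = []
    title, words = None, 0
    for line in draft.splitlines():
        match = HEADING.match(line)
        if match:
            if title is not None:
                sections.append((title, words))
            title, words = match.group(1).strip(), 0
        elif title is not None:  # text before the first heading is skipped
            words += len(line.split())
    if title is not None:
        sections.append((title, words))
    return sections


def flag_sections(draft: str, low: int = 150, high: int = 300) -> list[str]:
    """Return headings whose section body falls outside the low-high word range."""
    return [title for title, count in section_word_counts(draft) if not low <= count <= high]


sample = (
    "## What does a CMP cost?\n" + "word " * 200
    + "\n## How difficult is CMP implementation?\n" + "word " * 40
)
print(flag_sections(sample))
```

In this example, only the implementation section is flagged, because its 40-word body falls below the range. A flagged section isn’t automatically wrong; it’s a cue to check whether the block still answers one question on its own.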

Answer-First Structure

Start sections with the answer, then expand.

Don’t spend three paragraphs warming up to the point. Lead with the clearest version of the answer or definition, then use the rest of the section to explain how it works, why it matters, or where the trade-offs are.

This keeps the section focused and makes the main point immediately visible.

Question-Led Headings

Think about how your buyers might prompt their questions, and then phrase your headings to match. Avoid vague labels like “Implementation guide” or “Considerations.” Instead, use headings that reflect actual decision points, such as:

- How difficult is CMP implementation?

- What does a CMP cost?

- Which compliance risks does a CMP reduce?

This makes your article more navigable and engaging for human readers, and makes it easier for retrieval systems to match sections to specific user prompts.

Extraction-Friendly Formatting

Some structures are particularly easy for AI systems to reuse:

- TL;DR summaries

- Comparison tables

- Bulleted lists

- Clearly labelled sections

These formats work well because they organize information into self-contained units and answer reader intent.

A comparison table highlights differences immediately. A bulleted TL;DR list summarizes key information.

These structures are inherently good editorial practice, because they improve readability and understanding first and foremost. The fact that they are easy for AI systems to extract is an added bonus.

Platform-Specific Tips for AI Visibility

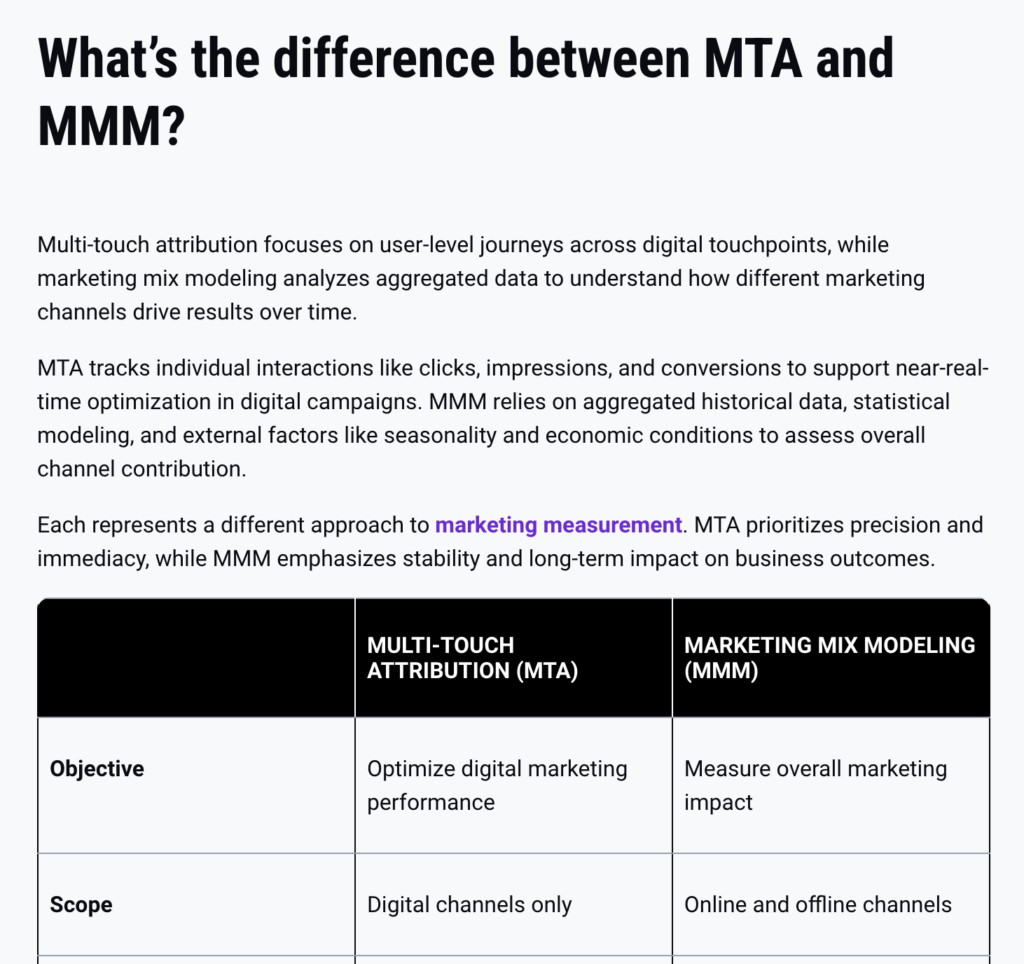

Different AI tools retrieve and cite content differently. That means the same page may perform differently across ChatGPT, Perplexity, Claude, and Gemini.

The good news is that the shared fundamentals remain the same; content that’s clear, structured, and credible performs best across all four.

| Platform | What it tends to favor | What helps most |
| --- | --- | --- |
| ChatGPT | High E-E-A-T content that answers multiple intent queries | Clear definitions, summaries, strong author credibility |
| Perplexity | Fresh, skimmable content that already performs well on Google | Clear structure, domain authority, and JSON-LD formatting |
| Claude | Recent (from the past month), authoritative sources | Up-to-date, original research, and strong editorial standards |
| Gemini / AI Overviews | Google-indexed content with strong SEO foundations | Schema, hierarchy, page structure, rankings |

ChatGPT

ChatGPT tends to pull from authoritative guides and structured explainers.

Pages that answer multiple intent queries with clear summaries, direct definitions, and strong author credibility signals perform well with this LLM.

If your page helps the system quickly understand “what this is” and “why it matters,” it has a better chance of being reused and cited.

ChatGPT also prioritizes content from domains that have established a strong trust footprint across the internet. Recent research found that sites with over 30,000 referring domains are 3.5x more likely to be cited by the answer engine than those with up to 200 referring domains.

So work towards becoming an authority in your industry that other sites consistently reference and link to.

For a deeper dive, read our article on how to rank in ChatGPT.

Perplexity

Perplexity’s Sonar algorithm favors content that already ranks highly on Google’s SERP. But showing up on page one isn’t enough to get you mentioned in this answer engine.

Recently updated pages with a clear structure and well-supported topical clusters, all of which are valuable for SEO as well, can help you get mentioned in this answer engine.

Format for scannability with short, declarative statements, and use lists, tables, and bullet points where possible to make it easier for Perplexity to surface your insights as a direct answer.

Additionally, use question-based headings and lead with answers to make your content highly scannable.

Beyond that, you want to include structured comparisons and original statistics where possible, optimize your URLs with clean slugs, and implement structured data like JSON-LD markup.
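For reference, this is roughly what JSON-LD markup for an article looks like. The Python sketch builds schema.org `Article` markup with author and date fields, which also make the credibility and freshness signals discussed in this chapter machine-readable. The headline, author, publisher, and dates are placeholders, and the sketch makes no claim about how Perplexity weighs any individual property.

```python
import json
from datetime import date


def article_jsonld(headline: str, author_name: str, author_title: str,
                   published: date, modified: date, publisher: str) -> str:
    """Build schema.org Article markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        # Author details support the credibility signals discussed above.
        "author": {"@type": "Person", "name": author_name, "jobTitle": author_title},
        "publisher": {"@type": "Organization", "name": publisher},
        # ISO 8601 dates make publication and refresh dates explicit.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(data, indent=2)


# All values below are hypothetical placeholders.
markup = article_jsonld(
    headline="How CMP implementation works",
    author_name="Jane Example",
    author_title="Privacy Engineer",
    published=date(2024, 3, 1),
    modified=date(2025, 1, 15),
    publisher="Example Co",
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The printed `<script type="application/ld+json">` block goes in the page’s HTML. Generating it from the same source as the visible byline and “last updated” date helps keep the markup and the page consistent.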

For more tips, check out our guide for ranking your content in Perplexity.

Claude

Claude pulls primarily from authoritative original sources like company blogs, official documentation, peer-reviewed research, and government or institutional publications.

It’s not strongly tied to Google’s rankings, so an article can rank on page one and still not be cited in Claude if it lacks clear sourcing, original insight, or editorial credibility.

Focus on becoming an authority in your space by publishing original data, proprietary research, and well-documented explainers that other credible outlets are likely to reference.

Claude also weighs recency heavily for fast-moving topics. For industries where things change frequently, like data privacy compliance, you should maintain a regularly updated resource hub with clearly dated content.

Also prioritize clarity and specificity over scannability. This answer engine favors content that addresses user intent directly in plain, precise language and is dense with accurate claims, rather than content built around highly skimmable formatting.

Finally, avoid overly salesy language; Claude deprioritizes content that reads as promotional over informational.

Gemini and AI Overviews

Gemini and AI Overviews are strongly tied to Google’s index.

That means SEO clarity, schema, page hierarchy, and clean structure are still very important. If the page is weak in traditional search terms, its chances of surfacing in Google’s AI experiences are lower too.

Additionally, these answer engines favor multimedia-rich content with contextual headings, like a step-by-step guide with annotated images.

On the technical side, structured data types such as FAQ, HowTo, Product, and Video schema can help signal to Google’s LLMs what kind of content they’re looking at.
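As an illustration, the sketch below generates schema.org `FAQPage` markup from question-and-answer pairs, which fits naturally with question-led headings. The questions and placeholder answers are hypothetical; in practice, the marked-up text should match what readers can see on the page, as Google’s structured data guidelines require.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage markup from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical questions; answers should mirror the visible on-page text.
markup = faq_jsonld([
    ("What does a CMP cost?", "Placeholder answer that matches the on-page text."),
    ("How difficult is CMP implementation?", "Placeholder answer that matches the on-page text."),
])
print(markup)
```

Keep in mind that since 2023 Google has shown FAQ rich results only for a narrow set of authoritative sites, so treat this markup as a way to label content type rather than as a route to rich snippets.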

To learn more, head to our article on how to rank in Google Gemini.

Make Existing Pages Work Harder

One common mistake I see is brands overlooking their existing content and focusing only on net new content pieces. By improving page structure and clarity, and making targeted edits to refresh content and improve credibility, you can often get results faster than when you start from scratch.

That’s partly because your existing content already has impressions, crawl history, backlinks, historical authority, and some level of search visibility.

Often, a page has value but no longer reflects how the topic is discussed today, or isn’t structured for AI retrieval.

As Kristina told me, “Improving existing content can often deliver more impact than publishing new material. Refreshing content gives brands a chance to improve information density, update entities and concepts, strengthen explanations, and align pages with current search behavior and category language.”

Another good reason to update existing content is that freshness is an AI visibility factor in its own right. Recent research shows that recently updated content is significantly more likely to be cited by LLMs.

How to Do an On-Page Optimization

I recommend starting with pages that already show signs of traction. The best candidates are often:

- High-impression pages with weak click-through rate (CTR)

- Pages already surfacing in AI answers

- Pages that lead directly into decision-stage content like pricing or demo pages
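The first of these criteria is easy to check against a per-page export of clicks and impressions, such as the one Google Search Console provides. This sketch assumes that data shape, and the 5,000-impression and 1 percent CTR cutoffs are hypothetical placeholders to tune for your own site, not benchmarks. It computes CTR as clicks divided by impressions and lists matching URLs, highest impressions first.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    url: str
    clicks: int
    impressions: int

    @property
    def ctr(self) -> float:
        """Click-through rate: clicks divided by impressions (0.0 if no impressions)."""
        return self.clicks / self.impressions if self.impressions else 0.0


def refresh_candidates(pages: list[PageStats], min_impressions: int = 5000,
                       max_ctr: float = 0.01) -> list[str]:
    """Return high-impression, low-CTR URLs, sorted by impressions (highest first)."""
    hits = [p for p in pages if p.impressions >= min_impressions and p.ctr <= max_ctr]
    return [p.url for p in sorted(hits, key=lambda p: p.impressions, reverse=True)]


# Hypothetical export rows.
pages = [
    PageStats("/what-is-a-cmp", clicks=40, impressions=12000),
    PageStats("/cmp-pricing", clicks=300, impressions=8000),
    PageStats("/cmp-implementation", clicks=30, impressions=6000),
    PageStats("/cmp-glossary", clicks=2, impressions=900),
]
print(refresh_candidates(pages))
```

The pricing page is excluded because its CTR (3.75 percent) is already healthy, and the glossary page falls below the impression cutoff. The other two criteria, AI answer visibility and links into decision-stage pages, still need manual review or separate tracking.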

And in my experience, a refresh doesn’t always call for a full rewrite. In many cases, the biggest gains come from a focused set of improvements, such as:

- Tightening explanations

- Improving article structure

- Adding TL;DRs

- Including internal links

- Updating statistics using credible sources

- Reworking introductions and conclusions

But strong on-page optimization should also go further than a page-level refresh. It should strengthen the surrounding content cluster, close intent gaps, and create clearer paths through the funnel. That means:

- Adding missing topical depth where the cluster is incomplete

- Strengthening internal links between related pages

- Routing readers more clearly toward evaluation-stage pages

This is how we use optimization to connect to commercial goals. Paired with strengthened cluster coverage and improved internal linking, it helps readers move from discovery to evaluation.

That connection between page quality, cluster completion, and down-funnel progression is central to how we use content to drive organic growth. This approach played a big role in helping us increase AI search traffic by 27 percent QoQ and boost marketing-qualified leads (MQLs) by 48 percent for Usercentrics.

The Takeaway: Quality Content Still Wins

Content continues to drive growth in the AI search era. Discovery turns into evaluation, evaluation supports conversion, and that chain keeps generating meaningful growth even as search evolves.

So while AI may be changing where discovery happens, it doesn’t remove the need for evaluation. Buyers still need trustworthy content to understand a category, validate what they’ve been told, compare options, and assess risk before they move forward.

That’s why quality content still matters. The brands most likely to protect traffic and pipeline are the ones creating content that holds up under scrutiny, answers real decision questions, and helps readers take the next step.

The content system behind the Usercentrics results reflects exactly that. It combines cluster planning, subject matter expert-driven production, extractable formatting, and continuous content updates to build content that’s easier for AI systems to extract, and more useful for buyers when they click through.

The next chapter looks at what happens beyond the page — authority, citations, and third-party consensus — and how they shape whether AI systems trust your brand enough to recommend it in the first place.